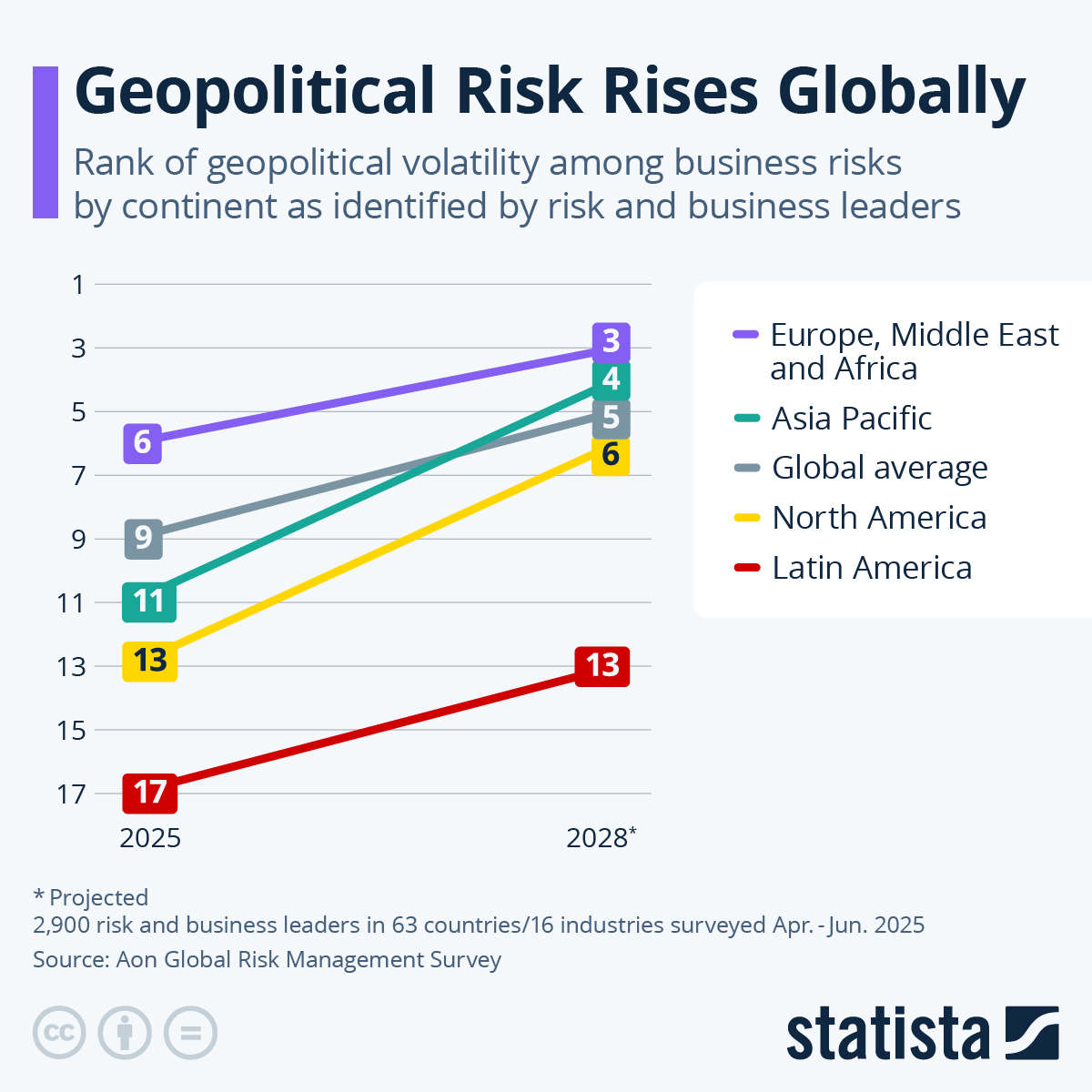

March 18, 2026, marks a breaking point in how we consume visual history. For decades, the "World in Pictures" format served as a reliable, if curated, window into global events. You saw a protest in Paris, a drought in East Africa, or a tech launch in San Francisco, and you accepted the photographic evidence as a baseline reality. That era of passive trust is dead. Today’s news cycle isn't just a collection of captured moments; it is a sophisticated battleground where the "why" behind an image matters more than the pixels themselves.

The primary shift we are witnessing right now is the total collapse of the unedited original. When you look at the major headlines today, you aren't seeing raw photography. You are seeing a heavily filtered, often algorithmically optimized interpretation of global strife and triumph. The industry has moved beyond mere color correction into a space where the emotional resonance of an image is manufactured before it even hits a wire service.

The Illusion of Proximity

Most readers believe they are seeing the front lines of global conflict through the eyes of a brave photojournalist. The reality is more clinical. Modern newsrooms now rely on a hybrid of drone-captured footage and satellite imagery that is later "humanized" by editorial teams. This creates a dangerous distance. We are observing the world from a God's-eye view, which strips away the visceral, messy reality of human experience.

The Problem With Polish

High-definition sensors have become too good. When a photograph of a natural disaster looks like a cinematic still from a big-budget movie, it triggers a subconscious "uncanny valley" response in the viewer. We stop seeing the suffering and start admiring the composition. This aestheticization of tragedy is not an accident; it is a calculated move to keep engagement high without making the audience feel too uncomfortable to keep scrolling.

If a photo is too gritty, people turn away. If it is too polished, they don't believe it. Finding the middle ground has become the most expensive part of a modern news budget.

The Verification Crisis

We are currently navigating a nightmare of provenance. On this day in 2026, nearly 40% of the images circulating on social media regarding the current border disputes are either partially synthetic or entirely misattributed. This isn't just about "fake news" in the traditional sense. It’s about the subtle manipulation of metadata to make a photo from five years ago appear as if it happened five minutes ago.
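One basic sanity check against that kind of recycling can be sketched in a few lines of Python. This is a minimal illustration, not a verification tool: it assumes you already have the capture time an image claims and the earliest date a reverse-image search found it online. The function name, parameter names, and example dates are all hypothetical.

```python
from datetime import datetime, timezone


def claim_is_plausible(claimed_capture: datetime, earliest_sighting: datetime) -> bool:
    """Return False when the image was seen online before it was supposedly taken.

    Both arguments are assumed to be timezone-aware datetimes. A True result
    does not prove the claim; it only means this one check found no conflict.
    """
    return earliest_sighting >= claimed_capture


# Hypothetical example: a photo presented as taken on March 18, 2026,
# but already found online in 2021.
claimed = datetime(2026, 3, 18, 9, 0, tzinfo=timezone.utc)
first_seen = datetime(2021, 6, 2, 14, 30, tzinfo=timezone.utc)
recycled = not claim_is_plausible(claimed, first_seen)
```

A photo cannot circulate before it exists, so a sighting that predates the claimed capture time is enough to reject the claim. The reverse does not hold: passing this check says nothing about whether the image is genuine.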

How the Fraud Works

- Context Stripping: Taking a genuine photo of a crowd and re-labeling it to fit a specific political narrative.

- Shadow Editing: Using AI to add or remove small details—a flag, a weapon, a specific person—to change the entire meaning of the scene.

- Algorithmic Boosting: Pushing images that provoke high-arousal emotions (anger, fear) while burying nuanced, complex visuals.
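Context stripping, the first item above, is the easiest of the three to illustrate. The sketch below assumes you have a set of posts as pairs of image bytes and caption text (a hypothetical input shape) and flags any image that appears under captions that disagree. It uses exact SHA-256 hashing, so it only catches byte-identical copies; a cropped or re-encoded file would need perceptual matching, which is out of scope here.

```python
import hashlib
from collections import defaultdict


def find_relabeled_images(posts):
    """Group posts by the SHA-256 of their image bytes and return the groups
    whose captions disagree.

    `posts` is an iterable of (image_bytes, caption) pairs. Captions are
    compared after trimming whitespace and lowercasing, nothing smarter.
    """
    captions_by_digest = defaultdict(set)
    for image_bytes, caption in posts:
        digest = hashlib.sha256(image_bytes).hexdigest()
        captions_by_digest[digest].add(caption.strip().lower())
    # Keep only images that circulate under more than one distinct caption.
    return {d: sorted(c) for d, c in captions_by_digest.items() if len(c) > 1}


# Placeholder bytes stand in for real image files.
posts = [
    (b"crowd-photo", "Rally in Paris"),
    (b"crowd-photo", "Riot at the border"),
    (b"launch-photo", "Tech launch"),
]
suspicious = find_relabeled_images(posts)
```

A flagged image is a prompt for a human to find out which caption, if any, is the original. The code cannot decide that.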

The industry likes to talk about "transparency," but very few outlets actually provide a verifiable chain of custody for their visuals. We are expected to trust the brand name, even as the brand names outsource their content gathering to unverified freelancers using generative tools to "fill in the gaps" of a shot.

The Death of the Decisive Moment

Henri Cartier-Bresson famously championed the "decisive moment"—that split second where everything aligns to tell a story. In 2026, we have replaced the decisive moment with the continuous stream.

Cameras are everywhere. Every person at a protest is a walking broadcast station. You would think this would lead to more truth. It doesn't. It leads to a glut of noise that makes it impossible to find the signal. When you have ten thousand angles of the same event, the truth becomes a matter of which angle is the loudest.

The Cost of Free Content

The reason quality photojournalism is in a death spiral is simple: money. Why would a news organization pay a veteran $2,000 plus expenses to go into a hazardous area when they can scrape "eyewitness" photos from social media for free?

The result is a flattened perspective. We see what the algorithm wants us to see, captured by people who may not have the training to understand the context of what they are filming. We are losing the professional eye—the one that knows that what is happening just outside the frame is often more important than what is in the center.

Infrastructure of Deception

Behind the "pictures of the day" lies a massive infrastructure of data centers and moderation farms. Every image you see has been scanned, tagged, and categorized by a machine before a human editor ever touches it. This automation introduces a bias that we are only beginning to understand.

Western-centric bias remains baked into the software. An image of a protest in London is categorized differently from an image of a protest in Nairobi, even if the actions are identical. The software flags certain cultural symbols as "aggressive" while viewing others as "celebratory." This isn't a conspiracy; it's a technical limitation that has massive real-world consequences for how we perceive global stability.

The Rise of the Synthetic Witness

Perhaps the most disturbing trend of early 2026 is the use of "representative imagery." This is the practice of using AI-generated visuals to illustrate a story when no "real" photos are available.

The defense is usually that the image "accurately reflects the spirit" of the event. This is a lie. An image that didn't happen can never reflect the spirit of a real event. It is a fabrication that erodes the foundation of the historical record. If we start accepting "close enough" as a standard for news, we lose the ability to hold power to account. You cannot subpoena a hallucination.

Reclaiming the Lens

The solution isn't more technology. It isn't a better "deepfake detector" or a more complex blockchain verification system. Those are just more layers of the same problem.

The fix is a return to radical accountability. News organizations need to stop treating photography as a commodity and start treating it as a legal document. This means:

- Full Metadata Disclosure: Showing exactly what camera was used, where the photographer was standing, and every single edit made to the file.

- Mandatory Human Sourcing: A strict ban on "representative" synthetic imagery in any context labeled as news.

- Decentralized Funding: Supporting independent collectives that own their equipment and their archives, rather than relying on giant corporate aggregators.
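To make the first demand concrete, here is one way a disclosed edit history could be recorded so that a reader can recheck it. This is a sketch under stated assumptions, not a description of any outlet's practice or of an existing standard: the record layout, the function names, and the example edits are all hypothetical. Each entry is hashed together with the digest before it, starting from the hash of the original file, so changing or dropping an earlier edit breaks every later digest.

```python
import hashlib
import json


def _entry_digest(previous_digest: str, edit: dict) -> str:
    """Hash one edit together with the digest that precedes it."""
    payload = json.dumps(edit, sort_keys=True).encode("utf-8")
    return hashlib.sha256(previous_digest.encode("ascii") + payload).hexdigest()


def build_edit_log(original_bytes: bytes, edits: list) -> list:
    """Chain each edit to the one before it, starting from the original file's hash."""
    digest = hashlib.sha256(original_bytes).hexdigest()
    log = []
    for edit in edits:
        digest = _entry_digest(digest, edit)
        log.append({"edit": edit, "digest": digest})
    return log


def verify_edit_log(original_bytes: bytes, log: list) -> bool:
    """Recompute the chain and report whether every stored digest still matches."""
    digest = hashlib.sha256(original_bytes).hexdigest()
    for entry in log:
        digest = _entry_digest(digest, entry["edit"])
        if digest != entry["digest"]:
            return False
    return True


# Placeholder bytes and made-up edits, for illustration only.
original = b"raw-sensor-data"
log = build_edit_log(original, [
    {"step": "crop", "detail": "trimmed 5% from left edge"},
    {"step": "exposure", "detail": "+0.3 EV"},
])
log_is_intact = verify_edit_log(original, log)
```

A log like this only shows that the published record is internally consistent with the original file. It cannot stop an outlet from rewriting the whole log, which is why the accountability still has to come from people and not from the format.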

We are currently drowning in a sea of beautiful, high-resolution lies. The images of March 18, 2026, should be a wake-up call. If you can't tell the difference between a captured moment and a manufactured one, you aren't an informed citizen. You're just a consumer of a very expensive fiction.

Stop looking at the quality of the light and start looking at the source of the data.

Check the digital signature on the next "viral" image you share.