The traditional employment contract is predicated on the exchange of human time for fiat currency, a model that fails when the primary driver of value shifts from hourly output to the orchestration of autonomous digital systems. Jensen Huang’s proposal to integrate "AI tokens" into compensation structures is not a mere perk; it is a fundamental restructuring of the labor cost function. By shifting from a fixed-salary model to one that incentivizes the deployment and optimization of AI agents, enterprises are attempting to solve the scalability bottleneck of human management.

The Mechanics of Tokenized Compensation

To understand why a CEO would pitch AI tokens as a salary component, one must first define the unit of value. In the current compute economy, a token represents a discrete unit of text or data processed by a Large Language Model (LLM). When an employee is granted "tokens," they are essentially being granted "compute equity"—the right to deploy a specific amount of intelligence-generating capacity without personal capital expenditure.

This shift introduces a new tripartite structure for labor value:

- Base Salary: Payment for the employee’s domain expertise and strategic oversight.

- Compute Allocation (Tokens): The raw material required for the employee to scale their individual productivity through agents.

- Performance Alpha: The value created when an employee optimizes an AI agent to perform a task at a lower cost or higher speed than a human peer.

The logic follows that if an engineer manages 100 AI agents, their impact is no longer tethered to their 40-hour work week. The tokens act as a lever. Without them, the employee is a manual laborer; with them, they are a floor manager of a digital factory.

The Agentic Productivity Frontier

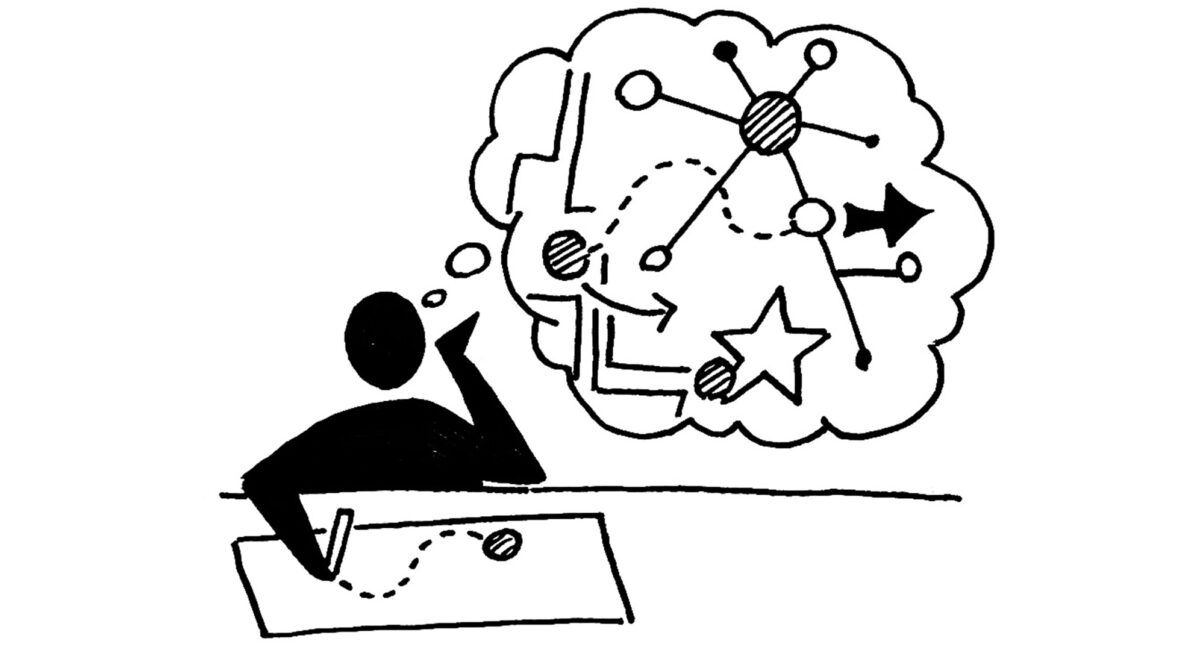

The transition from "AI as a tool" to "AI as an agent" changes the nature of work from execution to delegation. A tool, such as a calculator or a basic LLM interface, requires constant human input (the "Human-in-the-Loop" model). An agent operates on a "Human-on-the-Loop" basis, where a goal is set, and the agent determines the path to execution, handles sub-tasks, and only checks back for high-level approvals.

This creates a new economic reality: The Marginal Cost of Intelligence (MCI). In a pre-agent world, doubling the output of a marketing department required doubling the headcount. In an agentic world, doubling output requires roughly doubling token consumption, which costs far less than a second team, while human oversight stays close to static.
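To make the MCI idea concrete, here is a minimal sketch that compares the marginal cost of one extra unit of output under a headcount model and under an agentic model. Every figure (units per employee, cost per employee, tokens per unit, token price, oversight cost) is a hypothetical placeholder chosen for illustration, not data from any real firm or vendor.

```python
import math


def headcount_cost(units: int, units_per_employee: int = 100,
                   cost_per_employee: float = 10_000.0) -> float:
    """Cost of producing `units` when output scales only by adding people.

    All defaults are hypothetical illustration values.
    """
    employees = math.ceil(units / units_per_employee)
    return employees * cost_per_employee


def agentic_cost(units: int, tokens_per_unit: int = 50_000,
                 price_per_million: float = 10.0,
                 oversight_cost: float = 20_000.0) -> float:
    """Cost of producing `units` with agents: fixed oversight plus token spend.

    All defaults are hypothetical illustration values.
    """
    token_spend = units * tokens_per_unit / 1_000_000 * price_per_million
    return oversight_cost + token_spend


def marginal_cost(cost_fn, units: int, step: int) -> float:
    """Average extra cost per unit when output grows from `units` by `step`."""
    return (cost_fn(units + step) - cost_fn(units)) / step


# Doubling output from 1,000 to 2,000 units under each model.
mc_headcount = marginal_cost(headcount_cost, 1_000, 1_000)
mc_agentic = marginal_cost(agentic_cost, 1_000, 1_000)
print(mc_headcount)  # 100.0 per extra unit
print(mc_agentic)    # 0.5 per extra unit
```

Under these assumed numbers the agentic model still pays more in total tokens as output grows, but each extra unit costs a small fraction of what it costs under the headcount model.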

The Three Pillars of Agentic Integration

For a firm to successfully implement Huang’s vision, it must move beyond the novelty of chatbots and toward a structured architectural deployment.

1. The Orchestration Layer

Employees will no longer write code or copy; they will write "agentic workflows." This involves defining the boundaries of an agent's autonomy. The value of a worker will be measured by their ability to prevent "agentic drift"—where an AI loses track of the objective function—and their ability to chain multiple agents together to solve multi-step problems.

2. The Compute-to-Value Ratio

Enterprises must quantify the ROI of every token spent. If an employee uses 1,000,000 tokens to generate a report that saves two hours of human labor, the firm must calculate whether the cost of those tokens is lower than the fully loaded cost of those two hours. Tokenized salary ensures the employee has "skin in the game" regarding efficiency. If tokens are a finite part of their package, they are incentivized to use cheaper, more efficient models (e.g., GPT-4o mini rather than GPT-4o) for simple tasks.
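The report example above can be worked through as a rough calculation. The sketch below assumes a token price of $10 per million tokens and a loaded labor rate of $75 per hour; both are hypothetical values used only to show the arithmetic, and the function names are illustrative.

```python
def token_cost(tokens: int, price_per_million: float) -> float:
    """Dollar cost of consuming `tokens` at a given price per million tokens."""
    return tokens / 1_000_000 * price_per_million


def labor_cost(hours: float, loaded_hourly_rate: float) -> float:
    """Dollar cost of `hours` of human time at a fully loaded hourly rate."""
    return hours * loaded_hourly_rate


def compute_to_value_ratio(tokens: int, price_per_million: float,
                           hours_saved: float, loaded_hourly_rate: float) -> float:
    """Labor dollars saved per dollar of token spend.

    A ratio above 1.0 means the tokens cost less than the labor they replaced.
    """
    spend = token_cost(tokens, price_per_million)
    if spend <= 0:
        raise ValueError("token spend must be positive")
    return labor_cost(hours_saved, loaded_hourly_rate) / spend


# 1,000,000 tokens that save two hours of work (prices are assumptions).
ratio = compute_to_value_ratio(
    tokens=1_000_000,
    price_per_million=10.0,   # hypothetical token price
    hours_saved=2.0,
    loaded_hourly_rate=75.0,  # hypothetical loaded rate
)
print(ratio)  # 15.0
```

With these assumed inputs each dollar of tokens replaces fifteen dollars of labor. The same function shows how quickly the ratio falls if a pricier model is used for a task a cheaper one could handle.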

3. Intellectual Property Recapture

When an employee trains an agent to perform their specific job functions, the "knowledge" of the employee is distilled into the agent’s prompt engineering and memory. This creates a tension: does the agent belong to the employee or the firm? Huang’s model suggests a symbiotic ownership where the employee uses the company’s compute (tokens) to build these assets, effectively increasing their own "agentic output" while the company retains the infrastructure.

The Displacement Paradox

The primary criticism of AI agents is the potential for mass displacement. However, economic history suggests a shift in the labor demand curve rather than its total collapse. When the cost of a basic input drops (in this case, basic reasoning), demand for that input has tended to rise sharply, creating new roles to manage the surplus.

We are seeing the emergence of the Agent Architect. This role requires:

- Systemic Logic: Understanding how different AI models interact.

- Verification Expertise: The ability to audit AI output for hallucinations or logic errors.

- Resource Management: Balancing the token budget against the project deadline.

The "salary plus tokens" model serves as a bridge during this transition. It compensates the human for their shrinking role in execution while empowering them to dominate the new role of orchestration.

The Cost Function of Human-Agent Collaboration

The total cost of a task in this new economy can be expressed by the following logic:

$$C_{total} = (T_{human} \times R_{human}) + (K_{tokens} \times P_{token}) + O_{compute}$$

Where:

- $T_{human}$ is the time a human spends overseeing the agent.

- $R_{human}$ is the human's hourly rate.

- $K_{tokens}$ is the volume of tokens consumed.

- $P_{token}$ is the price per token.

- $O_{compute}$ is the fixed overhead of the infrastructure.

The agent pays for itself only when the labor cost it removes, the reduction in $T_{human}$ multiplied by $R_{human}$, exceeds the token spend $(K_{tokens} \times P_{token})$ plus any added $O_{compute}$. If an employee is unskilled at managing agents, they will consume large volumes of tokens for little time saved, making them a net loss for the organization. This explains why Nvidia and other tech leaders are so keen to gamify token usage through compensation; it forces the workforce to undergo a rapid, self-directed upskilling process.
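The cost function can be sketched directly in code. The field names below mirror the symbols in the formula. The three scenarios (manual work, a skilled agent manager, an unskilled one) use hypothetical numbers chosen only to show how the comparison plays out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskCost:
    t_human: float          # T_human: hours of human time on the task
    r_human: float          # R_human: hourly rate in dollars
    k_tokens: float         # K_tokens: tokens consumed
    p_token: float          # P_token: dollars per token
    o_compute: float = 0.0  # O_compute: fixed infrastructure overhead

    def total(self) -> float:
        """C_total = (T_human * R_human) + (K_tokens * P_token) + O_compute."""
        return (self.t_human * self.r_human
                + self.k_tokens * self.p_token
                + self.o_compute)


def agent_pays_off(manual: TaskCost, assisted: TaskCost) -> bool:
    """True when the agent-assisted run is cheaper than doing the task by hand."""
    return assisted.total() < manual.total()


# Hypothetical scenarios for one task; every number is an assumption.
manual = TaskCost(t_human=8.0, r_human=75.0, k_tokens=0, p_token=0.0)
skilled = TaskCost(t_human=1.0, r_human=75.0, k_tokens=2_000_000,
                   p_token=0.00001, o_compute=5.0)
unskilled = TaskCost(t_human=7.0, r_human=75.0, k_tokens=20_000_000,
                     p_token=0.00001, o_compute=5.0)

print(agent_pays_off(manual, skilled))    # True
print(agent_pays_off(manual, unskilled))  # False
```

In the unskilled scenario the time saved is small and the token spend is large, so the assisted run costs more than the manual one. That is the net-loss case described above.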

Risks and Systemic Limitations

The transition to a tokenized labor force is not without friction. Several structural bottlenecks remain:

- Context Window Constraints: While agents can perform tasks, their "memory" is limited by the context window of the underlying model. Complex projects that require months of historical context still require human cognitive continuity.

- The Hallucination Tax: Every minute a human spends verifying an agent's work is a minute where the $R_{human}$ cost is accruing. If an agent is only 80% accurate, the "tax" of verification may negate the token-based efficiency.

- Data Silos: Agents are only as effective as the data they can access. Most companies have fragmented data architectures that prevent agents from performing cross-departmental tasks.
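The hallucination tax can be estimated with a simple model. The sketch below assumes that a human reviews every agent output for a fixed time and that each incorrect output needs a fixed amount of rework. This linear model and all of the numbers are illustrative assumptions, and token spend is left out to isolate the verification cost.

```python
def verification_tax(items: int, accuracy: float, review_hours: float,
                     rework_hours: float, hourly_rate: float) -> float:
    """Expected human cost of checking and fixing `items` agent outputs.

    Assumes every item is reviewed and a fraction (1 - accuracy) needs rework.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    expected_hours = items * (review_hours + (1.0 - accuracy) * rework_hours)
    return expected_hours * hourly_rate


def break_even_accuracy(manual_hours: float, review_hours: float,
                        rework_hours: float) -> float:
    """Accuracy at which review plus rework takes as long as doing an item by hand.

    A result above 1.0 means review alone already costs more than manual work.
    """
    return 1.0 - (manual_hours - review_hours) / rework_hours


# Hypothetical workload: 100 items at 80% accuracy and $75 per hour.
tax = verification_tax(items=100, accuracy=0.8, review_hours=0.1,
                       rework_hours=0.5, hourly_rate=75.0)
threshold = break_even_accuracy(manual_hours=0.25, review_hours=0.1,
                                rework_hours=0.5)
print(round(tax, 2))
print(round(threshold, 2))
```

Under these assumptions an 80% accurate agent still beats manual work, because the break-even accuracy is about 70%. If the manual time per item drops or the review time rises, the threshold climbs and the same agent becomes a net cost.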

Strategic Execution for the Enterprise

Organizations looking to adopt this model should not start by issuing tokens. They should start by defining "Agent-Ready Roles."

Identify departments where the ratio of "Decision Making" to "Repetitive Execution" is low. These are the prime candidates for tokenized labor. In these sectors, the human role should be redefined as a Quality Assurance Lead for AI Systems.

The compensation structure should reflect this by pinning a portion of the bonus to "Autonomous Throughput": the amount of work completed by agents under a human’s purview with a zero error rate. This creates a direct incentive for the employee to not just "use AI," but to build robust, reliable systems that function without constant intervention.
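One way such a metric might be computed is sketched below. The `AgentTask` record and its fields are hypothetical. Requiring zero human interventions as well as zero errors is also an assumption about how a firm might choose to define the metric.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class AgentTask:
    task_id: str              # hypothetical identifier
    errors_found: int         # errors caught in audit or downstream
    human_interventions: int  # times a human had to step in mid-task


def autonomous_throughput(tasks: Iterable[AgentTask]) -> int:
    """Count tasks an agent finished with no errors and no human intervention."""
    return sum(
        1 for t in tasks
        if t.errors_found == 0 and t.human_interventions == 0
    )


def autonomous_rate(tasks: Iterable[AgentTask]) -> float:
    """Share of all tasks that count toward autonomous throughput."""
    task_list = list(tasks)
    if not task_list:
        return 0.0
    return autonomous_throughput(task_list) / len(task_list)


# Hypothetical task log for one employee's agents.
log = [
    AgentTask("t1", errors_found=0, human_interventions=0),
    AgentTask("t2", errors_found=1, human_interventions=0),
    AgentTask("t3", errors_found=0, human_interventions=2),
    AgentTask("t4", errors_found=0, human_interventions=0),
]
print(autonomous_throughput(log))  # 2
print(autonomous_rate(log))        # 0.5
```

Tracking the rate alongside the raw count avoids rewarding an employee who simply launches more agent runs without improving their reliability.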

The ultimate competitive advantage in the next decade will not belong to the company with the most employees, nor the company with the most AI. It will belong to the company that most effectively bridges the two through a compensation model that treats compute as currency and humans as architects of autonomous scale.